Somewhere in your LMS right now, there’s a module someone on your team generated with an AI tool. You may not know which one. The person who generated it may not still be on the team. An SME reviewed it, signed off, and it went live. It reads well. It’s current. By every standard the training function normally applies, it’s fine.

Then an auditor asks where the content came from.

They want to see the source documents behind it. They want the dates on those documents. They want to see how the validation was done, by whom, against what, and whether that process matched the one applied to the module it replaced. They want a line they can follow from the source document to the words on the screen.

This is the part of AI-enabled training that’s moving faster than the conversation about it. Content gets generated. It gets reviewed. It goes live. From the outside, the workflow looks like the old one: draft, review, approve, publish. What’s changed is that the thing doing the drafting doesn’t retain its sources unless the workflow was built to capture them.

In some industries that’s a quality problem. In regulated ones, it has sharper consequences. Training in those environments functions as a compliance record. The frameworks vary by sector: OSHA and ISO in manufacturing, FDA in pharma, FINRA in financial services, HIPAA in healthcare. But the documentation demands converge: what was trained, when, how it was validated, who signed off.

AI-generated content without that trail is the kind of thing auditors flag. The consequences don’t stop at a quality note in a review file. They land as findings, remediation demands, and exposure in the next external audit.

Manufacturing is where this shows up most sharply. The worker who follows the wrong procedure doesn’t miss a quota. They create a safety incident, a quality escape, or a line stoppage. Training running six weeks behind a process change produces a predictable chain: incident, investigation, audit, question. Somewhere in that chain, someone asks where the training material came from and whether it was traceable to the current SOP. The answer decides whether the organisation is explaining an isolated error or a systemic one.

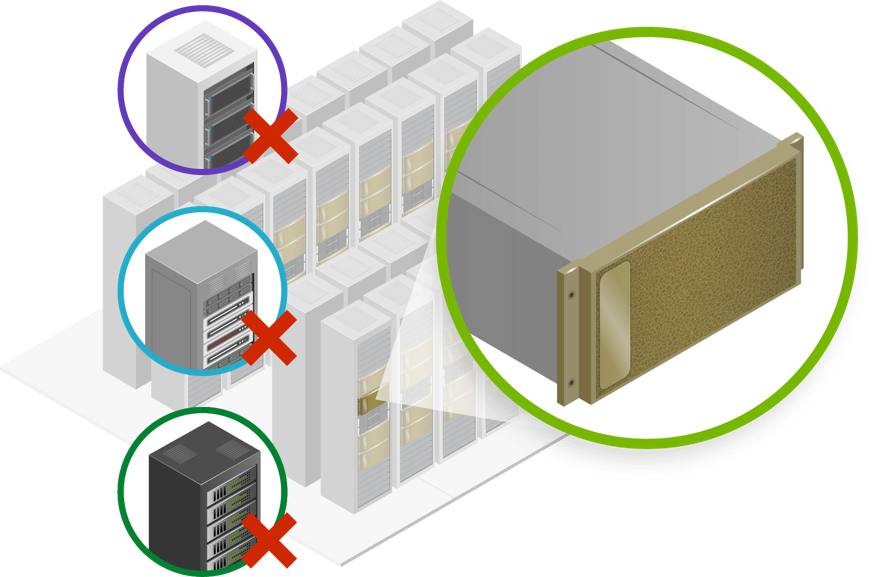

The teams holding up under audit started upstream of the drafting step. They rebuilt how source documents enter the workflow before they thought about what AI would produce from them. Every module carries a record of which SOP it was built from, which version, which engineer or SME validated it, and when. Updates go through the same validation as originals. When an auditor asks for the chain, someone can produce it that afternoon.
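As a rough illustration of what "every module carries a record" could look like in practice, here is a minimal Python sketch. The class name, field names, and sample values are all hypothetical, invented for this example rather than taken from any particular LMS or vendor tool. It only shows the four facts named above (source SOP, version, validator, date) travelling with the module, plus one simple check an auditor's question implies.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical per-module record; field names are illustrative only."""
    module_id: str
    sop_id: str          # which SOP the module was built from
    sop_version: str     # which version of that SOP
    validated_by: str    # the engineer or SME who validated it
    validated_on: date   # when the validation happened


def is_current(record: ProvenanceRecord, current_versions: dict[str, str]) -> bool:
    """Return True if the module was built from the SOP version now in force.

    `current_versions` maps SOP id -> current version (an assumed lookup;
    in a real workflow this would come from the document control system).
    """
    return current_versions.get(record.sop_id) == record.sop_version


# Made-up sample values, for illustration only.
record = ProvenanceRecord(
    module_id="lockout-tagout-101",
    sop_id="SOP-0042",
    sop_version="3",
    validated_by="j.doe",
    validated_on=date(2024, 5, 14),
)
```

A call such as `is_current(record, {"SOP-0042": "4"})` would flag this module as built from a superseded SOP. The point of the sketch is not the code itself but that the record exists at all and is created when the source document enters the workflow, not reconstructed afterwards.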

The teams that adopted AI only at the drafting step are in a different position. The output looks polished. The source often isn’t attached to it. A module gets revised in an afternoon, reviewed in an hour, and pushed live, and no one checks whether that matches the validation standard applied to the original six quarters ago. More content goes live each quarter. Less of it has a chain anyone can reproduce.

Compliance preparation is usually where this surfaces. Or an incident investigation. Someone pulls the training record associated with a procedure, traces backward, and finds that three updates ago the chain went quiet. The content is live. Thousands of workers have completed it. Nobody can show the chain.
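The backward trace described above can be sketched in a few lines, assuming each revision of a module stores (or fails to store) its source SOP version and validator. The `Revision` type, its fields, and the sample history are hypothetical and exist only to make the idea concrete.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Revision:
    """Hypothetical revision entry; None means the fact was never captured."""
    number: int
    sop_version: Optional[str]
    validated_by: Optional[str]


def first_untraceable(revisions: list[Revision]) -> Optional[int]:
    """Return the number of the earliest revision missing its source
    version or its validator, or None if the whole chain is intact."""
    for rev in sorted(revisions, key=lambda r: r.number):
        if not rev.sop_version or not rev.validated_by:
            return rev.number
    return None


# Made-up history: the first two revisions are documented, the rest are not.
history = [
    Revision(1, "2", "a.smith"),
    Revision(2, "3", "a.smith"),
    Revision(3, None, None),
    Revision(4, None, "b.jones"),
    Revision(5, None, None),
]
```

Here `first_untraceable(history)` returns `3`: everything from the third revision onward is live content with no reproducible chain. Running a check like this before an audit, rather than during one, is the difference the article is describing.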

The conversation worth having is the one that happens before the next partner is engaged, not after. The questions aren’t complicated. Is traceability built into the workflow from source document to deployed content, or added as an afterthought? Does the validation applied to an updated module match the standard used for the original? Can the partner produce an audit trail through the full content development chain on demand? Have they built this capability inside a safety-critical environment, or adapted it from somewhere the consequences of getting it wrong were lower?

None of these slow the good version of AI-enabled training down. What they do is separate the version that holds up from the version that looks the same until someone asks to see the paperwork.

Most L&D leaders are past the question of whether to use AI in the training workflow. The question now is whether the workflow has been rebuilt to hold what AI produces — or whether AI has been bolted onto a process that was never built to carry it.

Sify Digital Learning combines AI-enabled production with the governance and traceability that regulated training demands.