Most manufacturing L&D leaders aren’t short of content. They’re short of content that reflects what’s actually happening on the floor right now — the process that changed last month, the equipment upgraded last quarter, the regulatory standard that shifted while the course covering it sat in a review queue.

That’s the problem AI is well-positioned to solve. Not training quality. Training currency.

The distinction matters. Here’s what changes when the training workflow is rebuilt around currency:

1. The lag between operational reality and training content shrinks to days:

When a process changes or new equipment is commissioned, technical documentation feeds directly into updated learning content, compressing the sequential cycle of gathering, drafting, reviewing, approving, and republishing that turns a straightforward update into a six-week delay. The job aid a technician pulls up reflects the current procedure. The onboarding module a new hire completes reflects the floor they’re about to walk onto. That alignment, maintained consistently across a large and changing product portfolio, is what most training functions are trying to achieve and very few have actually built.

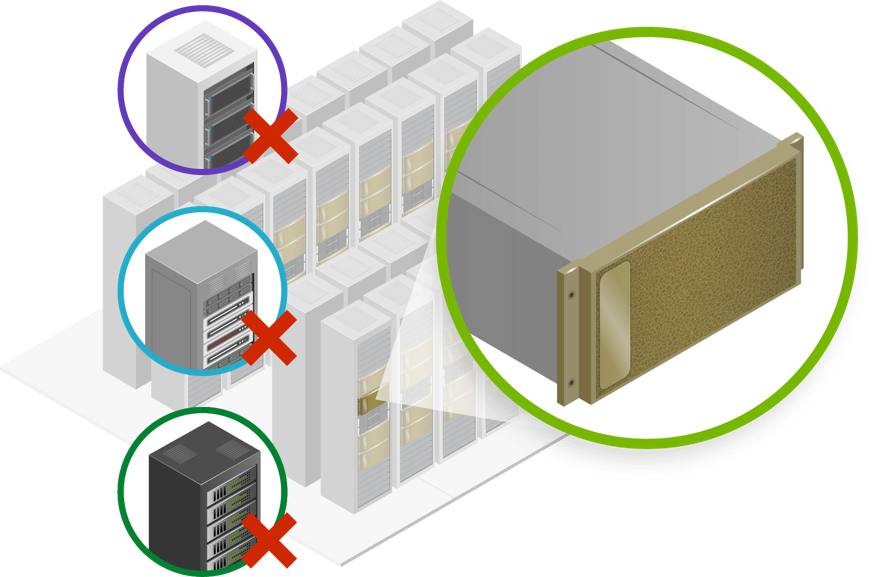

2. Generic programs give way to learning shaped by role:

A press operator and a CNC machinist working in the same facility carry different knowledge demands. A new hire on their third day and a veteran cross-training on a new line need different framing for the same information. Building that specificity manually, across dozens of roles and multiple sites, has never been practical. AI makes role-specific, experience-specific pathways achievable at scale — maintained and updated without rebuilding from scratch each time a role evolves or a product line changes.

3. Workers get the right procedure at the moment they need it:

A line stoppage doesn’t wait for a training session. A technician diagnosing a fault needs the current diagnostic procedure during the stoppage: on the floor, on whatever device they’re holding, in under a minute. AI enables structured, searchable, always-current knowledge access built for that moment. Whether a technician retrieves the correct isolation procedure in 45 seconds or works from memory isn’t a training quality problem. It’s a design and delivery problem, and it’s solvable.

4. AI-powered analytics make skills gaps visible earlier:

Skills gaps have traditionally surfaced when performance fails — a near-miss, a quality escape, a stoppage traced back to a procedure someone misunderstood. AI-powered analytics change what’s visible before that point: which procedures generate consistent errors across a cohort, which teams are underprepared ahead of an equipment rollout, which individuals need targeted support before a task clearance. The shift is from investigating after something goes wrong to intervening while there’s still time to act.

5. Readiness gets built before the change arrives:

New equipment arrives with a commissioning date. A regulatory update comes with an enforcement deadline. These are known events, often with weeks of lead time, yet most training functions respond to them after the fact — the change lands, the team is notified, the development cycle starts. With AI in the workflow, that lead time becomes usable. Training gets built against the documentation for the new process before it goes live, so workers arrive to a changed environment with content that already reflects it. First-week errors on a new line drop. The certification that should have covered the new standard actually does.

Those five things are real. They’re also not the full picture.

AI that accelerates content production without instructional design behind it produces faster mediocrity. A worker who passed the module but can’t apply the procedure under pressure isn’t better prepared. They may be more dangerous — carrying the confidence of someone who passed without the competency to back it.

What determines whether training actually changes performance has nothing to do with how quickly it was produced. It has to do with the questions asked before a single piece of content was built. What decisions will this worker face on the line? What does a high performer do differently during a fault condition? What does getting this specific procedure wrong cost in safety, quality, or downtime?

Those are design questions. AI doesn’t ask them. The L&D leaders getting this right have separated the production problem from the design problem — using AI to solve the former aggressively, while protecting the time and judgment required to solve the latter well.

Speed at the point of creation is worth very little if the questions that should precede it were never asked.