A lot of L&D teams have a success story to tell. A pilot that ran well. A piece of content produced faster than expected. A proof of concept that got stakeholder buy-in. The problem comes next – when they try to repeat it across the wider organisation and it falls apart.

This isn’t a failure of ambition. It’s a sequencing problem. And it’s more common than most people admit.

What makes a pilot succeed is not what makes a system scale

Pilots work because the conditions are controlled. A small team, a clear use case, a motivated group of people willing to work around the gaps. When something doesn’t connect, someone fixes it manually. When the output isn’t quite right, someone cleans it up. The pilot succeeds because people absorb the friction.

At scale, that friction becomes the bottleneck.

The manual steps that were invisible in a six-week pilot become full-time jobs when you’re running ten projects at once. The quality checks that one diligent person handled quietly can’t stretch across a whole department. The AI tools that produced decent output in isolation start producing inconsistent results when different teams use them in different ways with no shared standards.

The pilot didn’t lie to you. It just didn’t show you everything.

The real reason scale fails

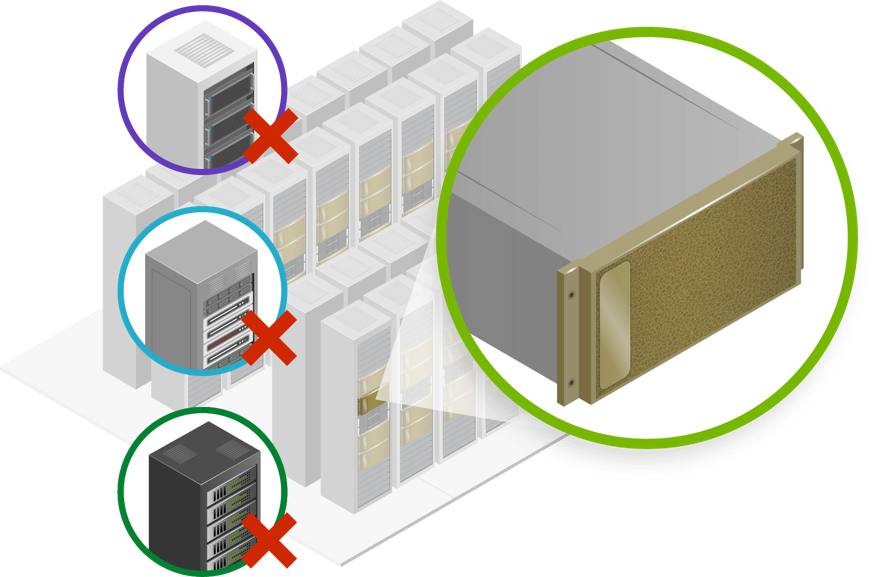

Most organisations adopt AI tools before they have redesigned the workflows those tools sit inside. That’s the root of it.

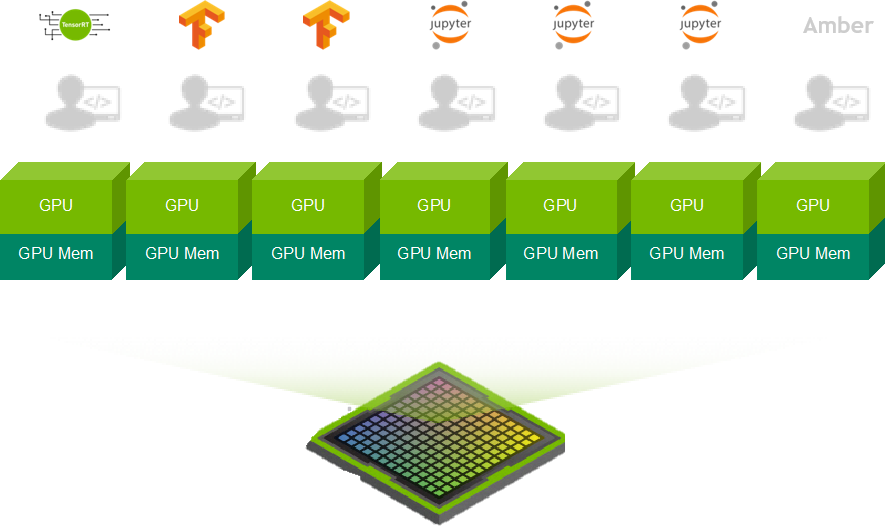

A text generator here, a video tool there, a translation platform somewhere else. Each one useful on its own, but none of them connected. The result is a production process that still depends on people to bridge the gaps between tools, reconcile different output formats, and maintain quality by hand.

According to recent analyst research, only 4% of organisations have actually redesigned how they work around AI. That means the vast majority are running new tools inside old systems and wondering why they can’t move faster.

What a scalable system actually requires

Scaling AI in L&D isn’t about adding more tools. It’s about connecting the ones you have into a system that can run consistently without heroic effort from individuals.

That means three things need to be in place.

- A clean content foundation. If the source material going into your AI pipeline is inconsistent – different formats, missing metadata, outdated versions sitting alongside current ones – the outputs will reflect that. Inconsistent inputs produce unreliable outputs, every time, at any scale. Fixing this before you scale is far less painful than fixing it after.

- A connected production workflow. Each stage of content production needs to feed the next without manual intervention. That means your storyboard output flows into your course build, your course build feeds your quality checks, and your quality checks feed into publishing. When those steps are disconnected, every handoff is a potential failure point.

- Traceability at every stage. At scale, you can’t have a person manually verify every piece of content. What you can do is build a system that tracks where content came from, flags where the AI was uncertain, and surfaces the right things for human review. That’s the difference between a quality process that scales and one that relies on individuals not dropping the ball.
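
To make the three requirements concrete, here is a minimal Python sketch of how they could fit together. It is an illustration under stated assumptions, not a description of any particular product: the required metadata fields, the stage functions, the confidence scores they return, and the 0.8 review threshold are all hypothetical placeholders.

```python
from dataclasses import dataclass, field

# Hypothetical values, chosen only for illustration.
REQUIRED_METADATA = {"title", "version", "format"}
REVIEW_THRESHOLD = 0.8


@dataclass
class ContentItem:
    source_id: str
    metadata: dict
    body: str
    confidence: float = 1.0                    # lowest confidence seen so far
    trace: list = field(default_factory=list)  # stages the item passed through


def validate_source(item):
    """Clean foundation: list required metadata fields that are missing."""
    return sorted(REQUIRED_METADATA - set(item.metadata))


# Stand-in stages. Each returns (new_body, confidence); a real stage would
# call an AI tool and report however sure it was about its output.
def storyboard(body):
    return f"storyboard({body})", 0.95


def course_build(body):
    return f"course({body})", 0.70


def quality_check(body):
    return body, 0.90


STAGES = [
    ("storyboard", storyboard),
    ("course_build", course_build),
    ("quality_check", quality_check),
]


def run_pipeline(item):
    """Connected workflow: each stage feeds the next with no manual handoff."""
    missing = validate_source(item)
    if missing:
        raise ValueError(f"{item.source_id}: missing metadata {missing}")
    for name, stage in STAGES:
        item.body, stage_confidence = stage(item.body)
        item.trace.append(name)  # traceability: record where content has been
        item.confidence = min(item.confidence, stage_confidence)
    return item


def needs_review(item):
    """Surface only the items where some stage was uncertain."""
    return item.confidence < REVIEW_THRESHOLD


if __name__ == "__main__":
    doc = ContentItem("doc-1", {"title": "Onboarding", "version": "2", "format": "docx"}, "raw")
    result = run_pipeline(doc)
    print(result.trace, result.confidence, needs_review(result))
```

The design choice worth noting is that human review is driven by the recorded confidence rather than by someone remembering to check: in this sketch the item is flagged because one stage reported a score below the threshold, while items that pass every stage comfortably would go straight through.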

The question worth asking now

If your pilot worked, that’s a good signal. It means the use case is real and the technology can deliver. But before pushing for a wider rollout, it’s worth being honest about why it worked.

Was it because the system was solid? Or was it because a small, motivated team absorbed the gaps?

If the answer is the latter, the next step isn’t to scale the pilot. It’s to build the system that makes the pilot repeatable.

We get into this in detail in our recent webinar, including a case study that shows what this looks like in practice. Click here to watch the full session on YouTube.

If you’d like to chat with us directly about your use case, our AI expert can jump on a call with you!

Just get in touch here.