How four technology companies broke through the limits of their learning delivery models.

The problem is rarely that the training is bad.

When we work with large technology companies on their learning programs, the content is usually solid. The subject matter experts know their material. The instructional intent is sound. People who attend the sessions leave better prepared than when they walked in.

The problem is that the delivery model has a ceiling. And the company has grown past it.

Maybe it’s certification training that’s technically rigorous but takes too long to produce across formats. Maybe it’s sales enablement content that’s accurate but buried in static repositories where nobody can find it when they need it. Maybe it’s instructor-led training (ILT) sessions that work well in a room but get recorded and uploaded as passive videos that don’t teach anyone anything. Maybe it’s an accreditation process that tests knowledge but can’t confirm whether someone is actually ready to deliver.

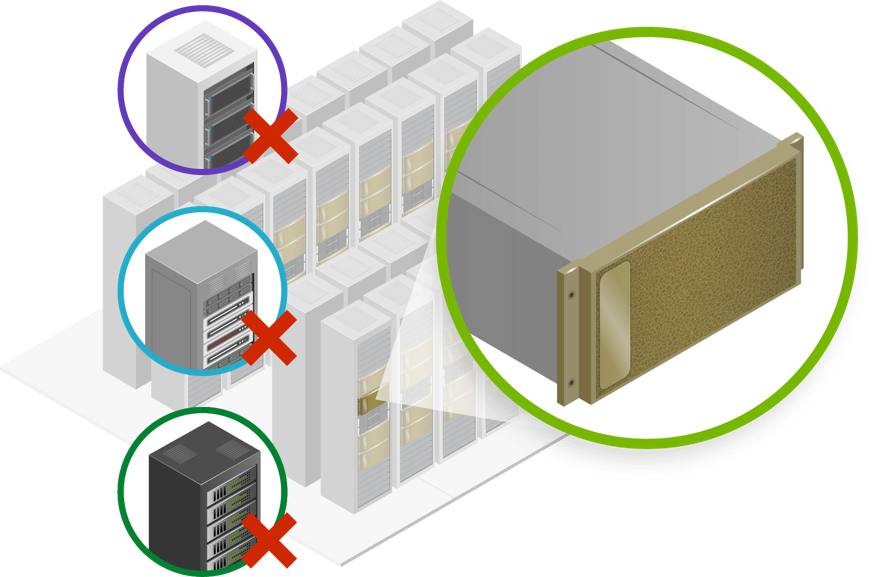

These aren’t content problems. They’re architecture problems. The knowledge exists. The way it gets delivered can’t carry it where it needs to go.

The usual fixes don’t help much. Recording the classroom session doesn’t make it eLearning. Uploading the PDF doesn’t make it accessible. Hiring more trainers doesn’t fix a model that depends on everyone being in the same room at the same time. These are patches on a structure that needs redesigning.

What we do about it

We don’t replace what works. We redesign how it’s delivered so it can grow.

That sounds simple, but the work behind it isn’t. It means understanding both the instructional intent and the operational constraints. It means figuring out which parts of a live training experience carry the real learning value, and which parts are just artifacts of the format. It means building systems that a client’s team can maintain and evolve on their own, without coming back to us for every update.

We’ve done this work across four engagements with global technology companies. Each one started from a different pressure point. Each one arrived at a different solution. But the underlying challenge was the same every time: good training that couldn’t grow.

Four companies, one pattern

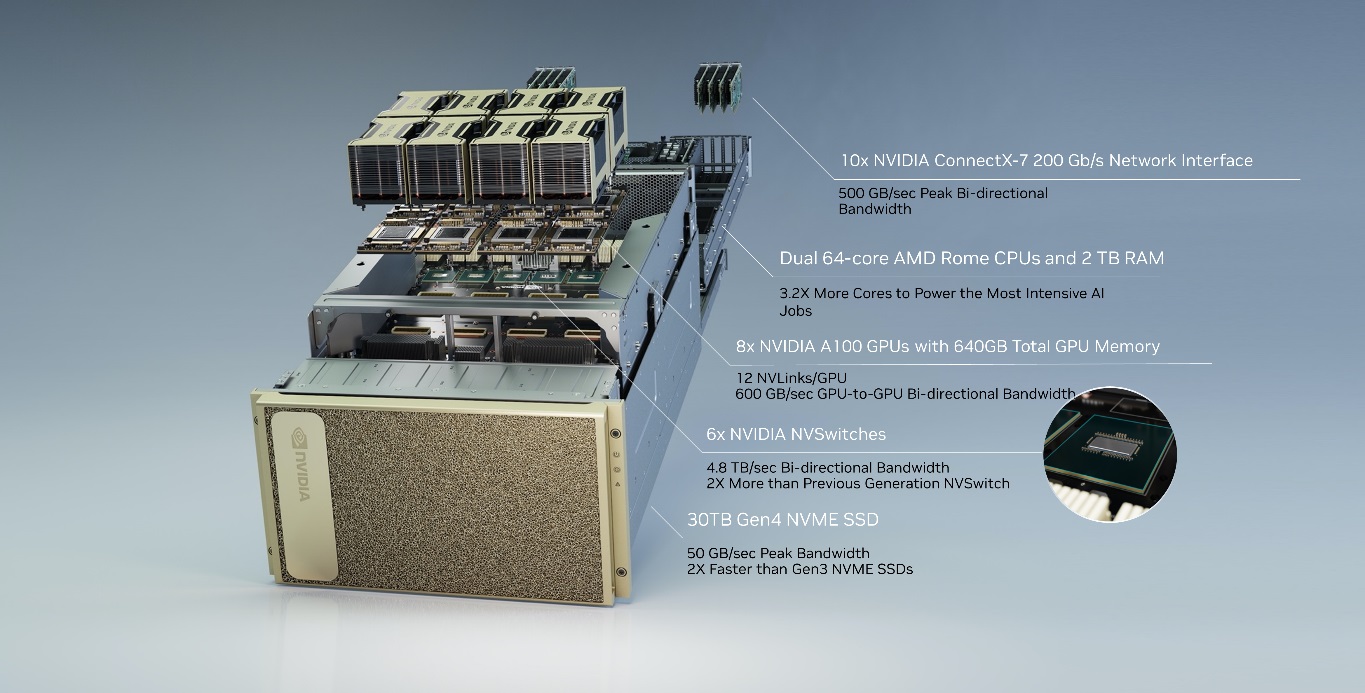

- A global networking and technology leader with one of the industry’s most recognized certification ecosystems needed training produced at volume across instructor-led, eLearning, mobile, and quick-reference formats. All of it had to meet the technical bar set by their highest-tier certified experts. The constraint was orchestration: maintaining accuracy, instructional quality, and speed across a rapidly expanding technology portfolio. We built a co-development model that paired the client’s certified subject matter experts (SMEs) with our instructional design team, producing over 300 hours of multi-format content. The program won two Brandon Hall Awards for Innovative Technology in Learning.

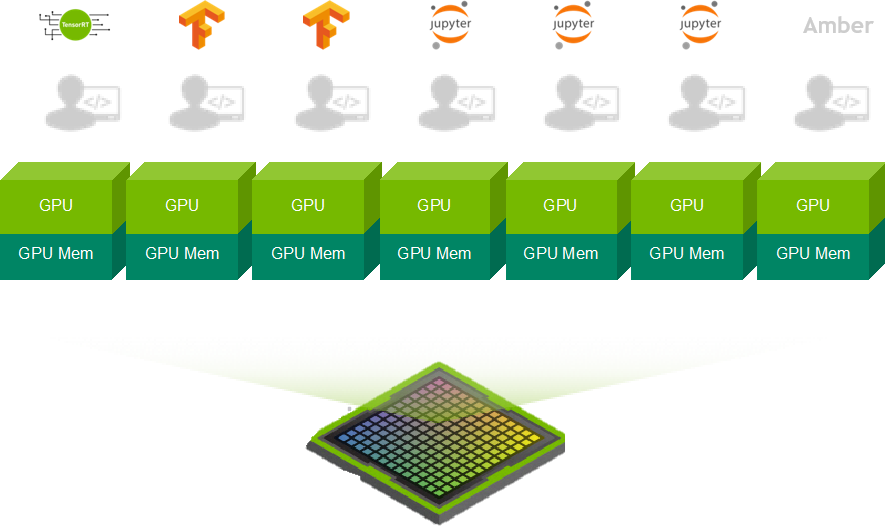

- A global digital infrastructure company expanding its services portfolio and worldwide sales footprint had a dual problem. Sales teams couldn’t find enablement material fast enough in the flow of work, and certification training was locked in instructor-led formats that didn’t scale. We addressed both sides: a conversational chatbot platform that gives sales teams instant, searchable access to enablement resources, and a structured conversion of classroom certification training into self-paced digital learning with active exercises, job aids, and scenario-based assessments.

- A major cloud services partner organization had built its training program around live events. Strong content was delivered once and then disappeared. The online versions were ILT recordings: long, passive, and hard to update. We transformed that event-driven approach into 115 eLearning modules across 6 role-based learning journeys and 8+ curriculum streams, built on an 18-month roadmap designed for continuous reuse and refresh.

- A global collaboration software company with a fast-growing partner ecosystem faced a different kind of ceiling. Partners passed certification exams, but the exams couldn’t confirm whether a team could execute an enterprise cloud migration under real conditions. We designed a three-stage accreditation model with a capstone simulation lab that gives partners a strict 20-hour migration window, complete with intentional challenges engineered into the backend data. Partners who complete it emerge accredited and field-ready.

What this adds up to

Four engagements. Four different starting points. But the same underlying story: a company with learning that works, a delivery model that can’t keep up, and a partner who rebuilds the architecture so the program can keep climbing.

These are not short projects. They’re ongoing relationships where the solution evolves as the client’s business evolves. The chatbot platform grows as the infrastructure company adds new products and services. The accreditation simulation gets harder as each cohort provides feedback. The cloud learning library expands as new services launch. The certification co-development model scales across new technology pathways.

That’s the part that matters most to us. We don’t build things that sit still. We build things that keep working after we step back, and keep growing as the business demands more.

If your learning program has hit its ceiling, the answer probably isn’t more content. It’s better architecture. That’s where we come in.