As artificial intelligence moves from experimentation to enterprise-wide deployment, cloud performance optimization is becoming a defining factor in whether AI delivers sustained business value or turns into an unpredictable cost driver.

AI models now influence customer experience, operational decision-making, fraud detection, and risk management. In this environment, performance inconsistency is not merely a technical inconvenience. It shows up as delayed decisions, uneven service quality, and volatile operating costs. That is why cloud performance optimization must be treated as a business capability, not a backend tuning exercise.

Yet many enterprises still struggle to extract consistent value from AI at scale. The issue is rarely a lack of cloud infrastructure. It is how performance is designed, governed, and adapted to different AI systems and cloud operating models.

The Core Failure in Cloud Performance Optimization for AI

The most common failure in cloud performance optimization is optimizing infrastructure behavior instead of AI outcomes.

Enterprises often measure success through utilization rates, instance efficiency, or cost-per-resource. While these metrics matter operationally, they say little about whether AI systems are delivering consistent inference, timely insights, or reliable business decisions.

This gap widens when performance ownership is fragmented. Infrastructure teams optimize capacity, data teams optimize pipelines, AI teams tune models, and finance teams manage spend — often without a shared definition of performance objectives or service-level outcomes that directly support business goals. Without unified accountability, AI platforms scale technically but underperform economically.

Three Misjudgements That May Limit AI Performance at Scale

Uniform cloud architectures for non-uniform AI workloads

AI workloads behave differently because they serve different business purposes. Model training is burst-driven and compute-intensive. Inference workloads demand deterministic latency and stable throughput. Retraining cycles depend on data velocity and model drift.

When these workloads are forced into a single cloud performance model, optimization becomes a compromise. Improvements in training efficiency may introduce inference instability, while broad cost controls may degrade performance where consistency matters most.

Reactive scaling instead of performance-led design

Many enterprises increase capacity only after performance degradation becomes visible — slower responses, longer processing windows, or missed internal benchmarks. By the time corrective action is taken, business impact has already occurred.

Performance-led organizations embed cloud performance optimization into architecture decisions early, ensuring AI systems meet performance thresholds by design rather than through post-facto remediation.

Cost optimization disconnected from performance governance

Cloud cost discipline is essential, but when cost optimization operates independently of performance governance, it introduces risk. Aggressive rightsizing or consolidation can reduce invoices while quietly increasing latency, throughput variance, or model instability.

In practice, this shifts cost from cloud bills to business outcomes — delayed insights, inconsistent customer experience, and reduced confidence in AI-driven decisions.

Cloud Performance Optimization Across Different Cloud Models

One reason performance optimization fails is the assumption that a single cloud model can support all AI requirements.

In reality, performance characteristics vary significantly across cloud environments:

Private Cloud

Private cloud environments offer greater predictability, tighter data control, and more stable latency. They are well suited to regulated AI workloads, sensitive data processing, and performance-critical inference systems. However, without intelligent capacity planning, they can become cost-intensive.

Public Cloud

Public cloud provides elasticity and supports rapid experimentation. It is effective for AI training and burst workloads. The challenge lies in managing performance variability and cost spikes as AI workloads scale.

Hybrid Cloud

Hybrid cloud enables enterprises to align workloads to the right environment — keeping performance-sensitive inference or regulated AI in controlled environments, while leveraging public cloud for training and experimentation. This model reduces performance trade-offs but introduces operational complexity.

Multi-Cloud

Multi-cloud strategies are often adopted for resilience, vendor flexibility, or regional requirements. However, they amplify performance inconsistency and observability fragmentation if not governed through a unified performance framework.

Effective cloud performance optimization requires coordinated performance visibility and governance across all these environments, not isolated tuning within each.

How Cloud Performance Optimization Changes by AI System Type

Not all AI systems create value in the same way — and cloud performance optimization must adapt accordingly.

Predictive and Decision AI (fraud detection, risk scoring, forecasting)

These systems demand low latency, consistency, and explainability. Performance variance directly impacts business decisions and risk exposure.

Generative AI (content generation, copilots, conversational AI)

Generative models are compute-intensive and cost-sensitive. Optimization focuses on throughput, response-time consistency, and managing GPU utilization without runaway spend.

Real-Time AI (recommendation engines, personalization, dynamic pricing)

These systems require deterministic inference performance under fluctuating demand. Latency drift directly affects customer experience and revenue.

Operational AI (process automation, optimization engines)

Here, performance optimization emphasizes reliability, predictability, and integration across data pipelines rather than raw speed.

Enterprises that fail to differentiate performance strategies by AI system type often over-optimize one dimension while undermining another.

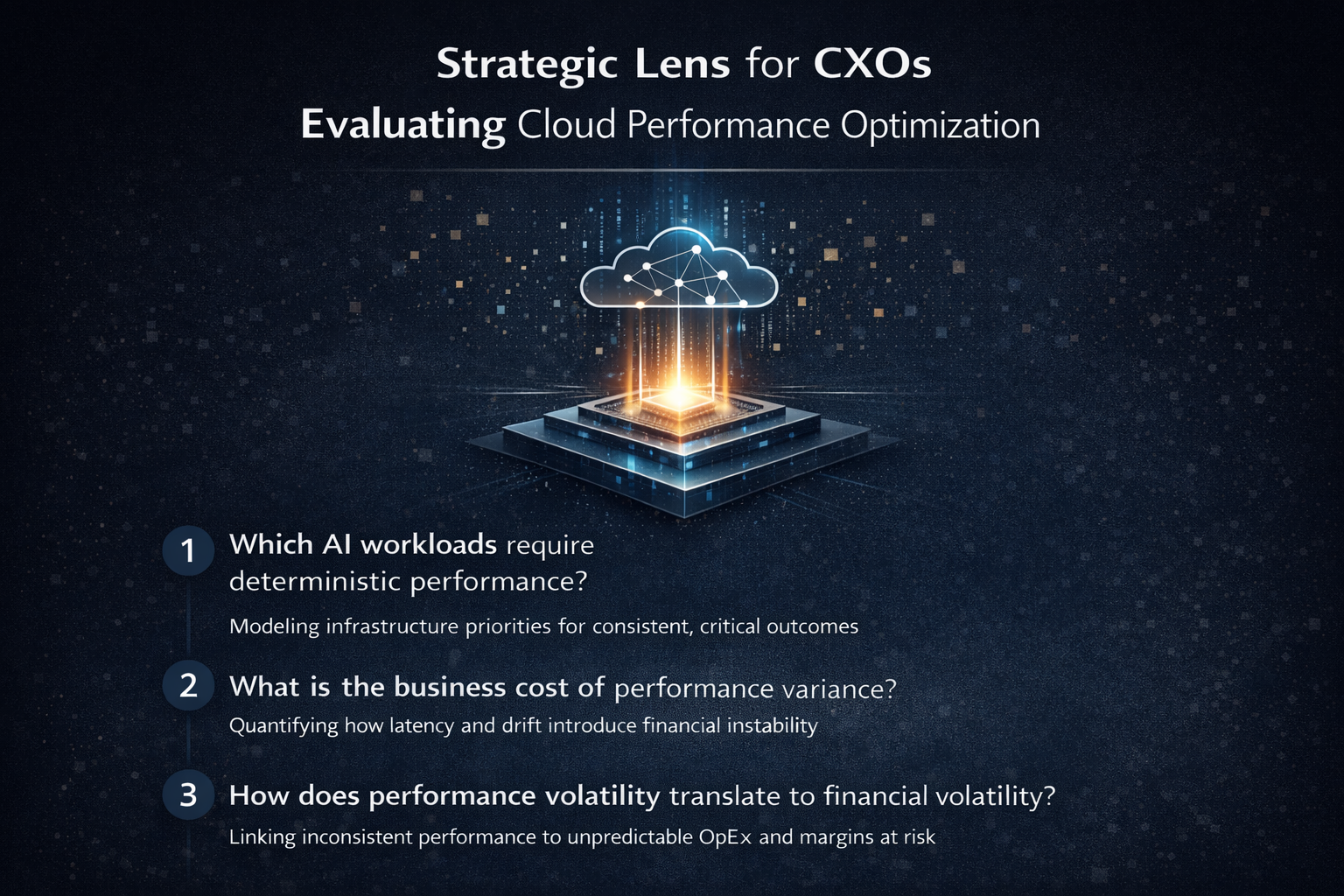

The CXO Lens for Evaluating Cloud Performance Optimization

Enterprises that extract durable value from AI evaluate cloud performance optimization through a business-first lens. The goal is not maximum efficiency, but predictable outcomes.

Which AI workloads require deterministic performance?

Not all AI workloads require the same guarantees. Identifying which systems cannot tolerate variance is a business decision, not a technical one.

What does performance variance, rather than outright outages, cost the business?

AI systems rarely fail outright. Subtle latency drift and throughput inconsistency often cause greater cumulative damage than downtime.
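A small illustration shows why averages can miss this kind of damage. It compares an average-based alert with a tail-latency (p99) alert on the same data. This is a minimal sketch under stated assumptions. The latency samples are hypothetical. The 20% tolerance is an arbitrary example threshold. The helpers `percentile`, `average`, and `drifted` are illustrative names, not part of any Sify service or monitoring tool.

```python
import math


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank into the sorted list
    return ordered[max(rank, 1) - 1]


def average(samples: list[float]) -> float:
    """Arithmetic mean, the kind of figure utilization dashboards usually report."""
    return sum(samples) / len(samples)


def drifted(baseline_value: float, current_value: float, tolerance: float = 0.2) -> bool:
    """True if the current value exceeds the baseline by more than `tolerance` (0.2 = 20%)."""
    return current_value > baseline_value * (1 + tolerance)


# Hypothetical inference latencies in milliseconds (illustrative, not measured data).
baseline = [100.0] * 100
current = [95.0] * 98 + [300.0, 300.0]  # most requests got faster, a few got much slower

mean_alert = drifted(average(baseline), average(current))
p99_alert = drifted(percentile(baseline, 99), percentile(current, 99))

print(f"mean: {average(baseline):.1f} -> {average(current):.1f} ms, alert={mean_alert}")
print(f"p99:  {percentile(baseline, 99):.1f} -> {percentile(current, 99):.1f} ms, alert={p99_alert}")
```

In this example the average improves slightly, from 100.0 ms to 99.1 ms, so a mean-based alert stays silent. Over the same period the 99th percentile triples, from 100 ms to 300 ms. That is the kind of subtle drift averages tend to hide.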

How does performance volatility translate into financial volatility?

Inconsistent performance inflates cloud consumption, disrupts forecasting, and destabilizes operating margins.

Through Sify’s CloudInfinit+AI services, enterprises see a 40% increase in productivity and establish a single source of truth by analyzing resource utilization and consumption patterns.

Can AI platforms scale without repeated architectural disruption?

If growth requires constant redesign, performance optimization has failed.

What Effective Cloud Performance Optimization Looks Like in Practice

When done correctly, cloud performance optimization becomes structural rather than reactive.

- Outcome-aligned cloud design: Workloads are placed in environments aligned to performance criticality.

- Performance defined in business terms: SLAs focus on inference consistency, response latency, and decision timeliness.

- Unified observability: Performance visibility spans compute, data pipelines, networks, and cloud environments.

- Scaling driven by performance thresholds: Capacity expands based on outcome impact, not utilization averages.
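Scaling on performance thresholds rather than utilization averages can be sketched as follows. This is a minimal sketch that assumes a service with a hypothetical p95 latency objective of 250 ms. The replica calculation borrows the ratio form used by the Kubernetes Horizontal Pod Autoscaler (desired = ceil(current * observed / target)), but applies it to latency instead of CPU. The code treats latency as falling in proportion to added replicas. That is a simplifying assumption that real services would need load testing to confirm. `ScalingPolicy`, `desired_replicas`, and `utilization_rule` are hypothetical names used only for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    slo_p95_ms: float        # hypothetical latency objective agreed with the business
    min_replicas: int = 2
    max_replicas: int = 20
    tolerance: float = 0.1   # ignore deviations within +/-10% to avoid flapping


def desired_replicas(policy: ScalingPolicy, current_replicas: int, observed_p95_ms: float) -> int:
    """Scale on the ratio of observed p95 latency to the latency objective.

    Assumes latency falls roughly in proportion to added replicas (a simplification).
    """
    ratio = observed_p95_ms / policy.slo_p95_ms
    if abs(ratio - 1.0) <= policy.tolerance:
        return current_replicas  # within the tolerance band: hold steady
    target = math.ceil(current_replicas * ratio)
    return max(policy.min_replicas, min(policy.max_replicas, target))


def utilization_rule(current_replicas: int, avg_cpu: float, target_cpu: float = 0.6) -> int:
    """Conventional rule, for comparison: scale on average CPU utilization only."""
    return max(1, math.ceil(current_replicas * avg_cpu / target_cpu))


# Hypothetical snapshot: CPU looks healthy, but the latency objective is being missed.
policy = ScalingPolicy(slo_p95_ms=250.0)
print("utilization rule:", utilization_rule(current_replicas=4, avg_cpu=0.55))  # stays at 4
print("latency rule:", desired_replicas(policy, current_replicas=4, observed_p95_ms=350.0))  # 6
```

Average CPU is 55% against a 60% target, so the utilization rule sees no reason to scale. The latency rule adds two replicas because the p95 objective is being missed. The tolerance band and the min/max bounds keep small fluctuations from triggering constant resizing.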

Enterprise Outcomes When Optimization Is Done Right

When cloud performance optimization is aligned with enterprise priorities, the benefits compound:

- Faster AI deployment without unpredictable cost escalation

- Predictable operating expenditure under fluctuating AI demand

- Stable inference performance for customer-facing and risk-critical systems

- Reduced architectural churn as AI platforms evolve

AI shifts from being fragile at scale to dependable by design.

Why Sify: Cloud Performance Optimization Designed for Enterprise AI

Sify Technologies approaches cloud performance optimization as an enterprise-grade capability — engineered for predictability, governance, and long-term scale.

Sify’s cloud services are built to support performance-sensitive AI workloads through outcome-aligned architectures, integrated visibility across cloud and network layers, and governance-driven optimization models. This enables enterprises to scale AI platforms without repeated redesign or financial instability.

For organizations treating AI as a core business capability, cloud performance optimization must be intentional. Sify provides that foundation.

Speak to our experts today to learn more about cloud performance optimization.